Converging on useful solutions

You can’t always get what you want. But if you try, sometimes you find you get what you need - Mick Jagger, Rolling Stones

Product development efforts must balance risks and opportunities over time. To do that, each team must learn how to quickly deliver a barely adequate, minimum viable product so that first-mover advantages can be exploited. Any business that fails to achieve this target may not get another chance. Endeavors encounter trouble whichever way they miss their target, whether by failing to provide sufficient value to their launch customers or by accumulating excess inventory whose unrealized value is never monetized. Both misses result in poor returns on investment, loss of credibility, and a smaller footprint for success going forward.

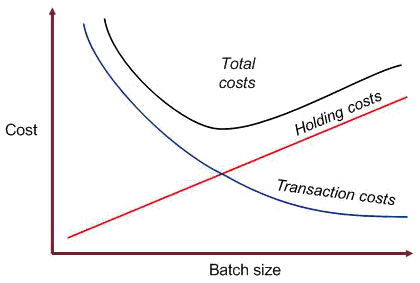

Once offerings are placed into service, existing resources need to be allocated to improving the quality and functionality of a product that may have been viable at launch but is now on life support. These improvements become the focus of subsequent iterative cycles that refine, shape, and extend the value of the solution for a broader range of customers. As figure 1 shows, the size of the batches packaging this value has a direct relationship to the holding costs for the business, which are a function of the availability of the information, material, and resources necessary for future investments.

As an example, in Managing the Design Factory, Don Reinertsen highlights how such batch size tradeoffs can play out in protracted test cycles:

Let us define the batch size of our testing process to be the amount of design work that we complete before we start testing. The largest batch size would be waiting for the entire design to be complete before we start the test. This will minimize the number of tests, but we will discover our defects very late in the design process, when it is expensive to react to them. In contrast, if we test a partially complete design, we deliver the information associated with the test earlier. Our problem is to balance the cost of extra test cycles against the cost of making expensive changes late in the design cycle.
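The tradeoff Reinertsen describes can be sketched numerically. The snippet below is a minimal illustration, not his model: it assumes a fixed cost per test cycle and a rework cost per batch that grows with the square of the batch size, on the premise that defects found later are more expensive to fix. The function names and all cost figures are hypothetical.

```python
def total_cost(batch_size, total_work=100, cost_per_test=50.0, rework_rate=0.2):
    """Total cost of testing `total_work` units of design in batches.

    Illustrative assumptions only:
    - each test cycle has a fixed cost `cost_per_test`
    - rework per batch grows with the square of the batch size
    - a final partial batch is charged as if it were a full one
    """
    num_tests = -(-total_work // batch_size)  # ceiling division
    test_cost = num_tests * cost_per_test
    rework_cost = num_tests * rework_rate * batch_size ** 2
    return test_cost + rework_cost


def best_batch_size(total_work=100, **kwargs):
    """Return the batch size with the lowest total cost under the assumptions above."""
    candidates = range(1, total_work + 1)
    return min(candidates, key=lambda b: total_cost(b, total_work, **kwargs))


best = best_batch_size()
print(best, total_cost(best), total_cost(1), total_cost(100))
```

Under these assumed figures, testing after every unit of work and testing only once at the end both cost more than an intermediate batch size, which is the balance the passage asks for.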

Faster iterations enable multiple efforts to search for value in parallel with the decision cycles framed by their circumstances. Timeboxing these iterations allows more alternatives to be explored within a given timeframe and allows them to be evaluated earlier, so that the ones worth saving can be identified and the rest pruned without a lot of collateral damage. These adaptation tactics form an essential business practice, one that enables convergence on fitness for use through incremental cycles of learning. This convergence is effective even within a constantly changing environment, as long as the team adapts faster than the environment changes.

In the initial iterative cycle, the initial conditions, transformations, and context may all be flawed, but it is necessary to be able to tell the difference. We would like to be able to reduce uncertainty and improve our projections of the work to go until our original commitments will be achieved; the earlier and more frequently we obtain information about that target and our path towards it, the better off we will be.

As products come and go, those that glitter may not subsequently be found to contain gold. The evaluation of opportunities must be adequately framed, so that evaluation and prioritization can be performed competently. Implementation then requires a series of transformations across multiple planning horizons, disciplines, organizations, product breakdown structures, and work breakdown structures. Control of batch sizes is critical to enable feedback to be incorporated with each cycle of these transformations, unlocking tremendous efficiencies:

Author Rose George explores the taken-for-granted world of containerization, with its often opaque ownership structures, regulations, and inhumane working conditions. Everything on a container ship is sized in twenty-foot equivalent units. Ninety percent of our modern world's consumption travels by these means, which makes it possible to send a can of beer around the world for about a penny. As her book describes, this creates odd incentives that make it cheaper to catch fish in Scotland, freeze them, ship them to China, fillet them, re-freeze them, and ship them back to Scotland, where they are sold in a Scottish grocery store for less than if all the processing had been done locally by Scottish workers.

Eberhardt Rechtin, co-author of The Art of Systems Architecting, describes how to achieve this progressive elaboration, by reducing batches and increasing iterations, as follows:

Stepwise refinement is the progressive removal of abstraction in models, evaluation criteria, and goals. It is accompanied by an increase in the specificity and volume of information recorded about the system, and a flow of work from generalized to specialized design disciplines. Within the design disciplines the pattern repeats as disciplinary objectives and requirements are converted into the models of form of that discipline. In practice, the process is neither so smooth or continuous. It is better characterized as episodic, with episodes of abstraction reduction alternating with episodes of reflection and purpose expansion.

Since the value of information uncovered during this pursuit quickly decays, it must be acted on quickly. While more information seems preferable to less, in practice, information is only trusted in decision-making when it is accurate, meaningful, and relevant to the situation. The groups responsible for accomplishing this stepwise refinement need sufficient capacity to respond to latent demand while having effective channels to return work packages that are not sufficiently developed for realization.

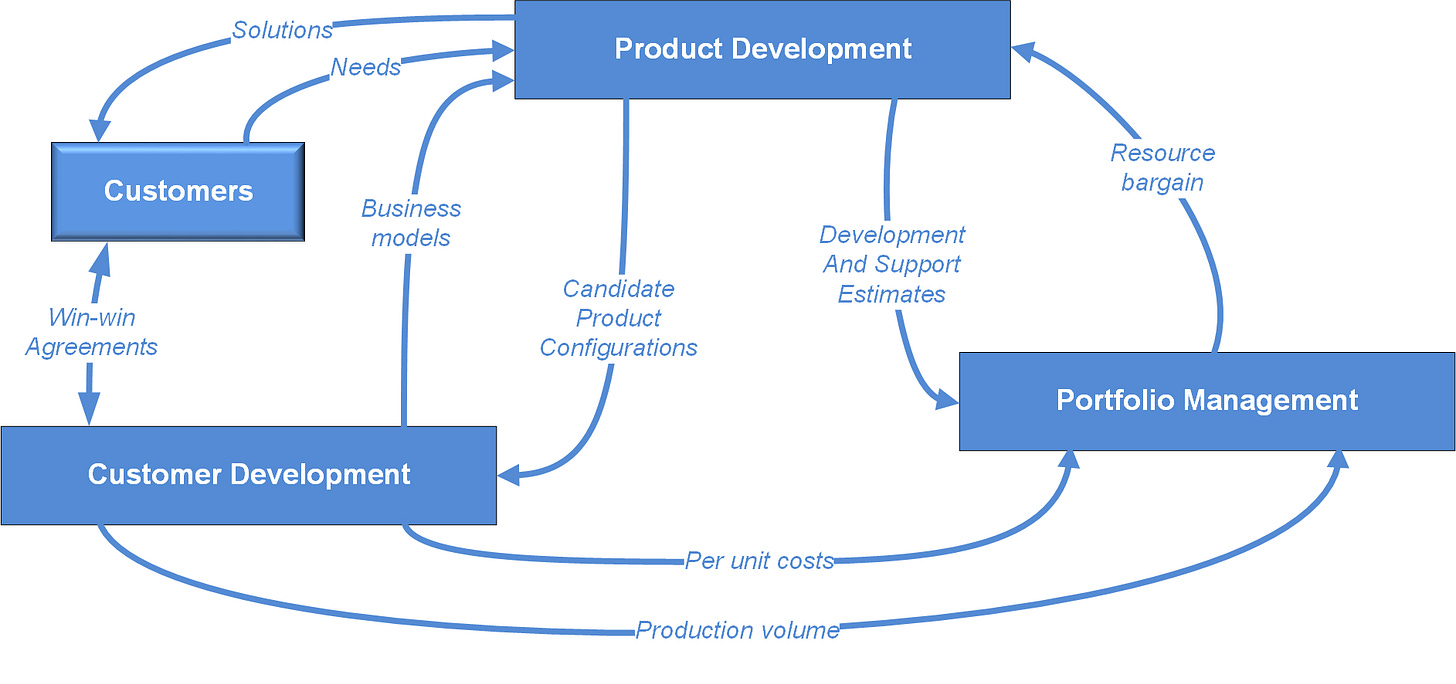

Who are the customers?

What do they need in order for business objectives to be realized?

What functions must be provided by solutions to fulfill these needs?

How well must solutions perform for these functions to meet expectations for cost, quality, and schedule?

Which requirements are necessary to characterize acceptable solutions in order to focus and manage development?

How will the uncertainty of achieving these requirements within the negotiated resource bargain be managed?

Here is Stafford Beer on such resource bargains:

The Resource Bargain is the ‘deal’ by which some degree of autonomy is agreed between the Senior Management and its junior counterparts. The bargain declares: out of all the activities that System One elements might undertake, THESE will be tackled (and not THOSE), and the resources negotiated to those ends will be provided.

However autocratic or democratic (or even anarchic) your Resource Bargaining proves to be, the governing mode of management is Accountability. Please think about this responsibility for resources provided in terms (not of financial probity, not of emotional dependency, but) of variety engineering. Can you possibly itemize every single thing that the subsidiary does, demand a report on it, and expect a justification? Obviously not. Therefore accountability is an attenuation of high-variety happenings [and] investment is a variety attenuator.

EXAMINE precisely how accountability is exercised and especially what attenuators (totals, averages, key indicators) are used. If in the end, you are appalled to discover that the machinery is inadequate, that Senior Management just does not have Requisite Variety, then you had better own up.

The discipline of triage is essential to close any gaps discovered between the identified problem and solution spaces and establish situational awareness for aligning the viewpoints of design, production, and support systems. Gaps have different levels of importance for different stakeholders, and these stakeholders have different interests at different points in their system’s lifecycle.

OODA loops have proven to be effective constructs for organizing the pursuit of agility over these cycles, especially in high-pressure environments. Chet Richards positioned the OODA loop as a strategic framework for progressively aligning actions with changing environments: “Strategy is a mental tapestry of changing intentions for harmonizing and focusing our efforts as a basis for realizing some aim or purpose in an unfolding and often unforeseen world of many bewildering events and many contending interests.”

Each iteration of these transformations can conceptually be divided into four phases:

Orientation

Orientation begins by performing a situational assessment which involves the exploration and framing of the perceptions of actors, the states of objects they are focused on, and the significance of events which shape those states, as each object interacts within a broader context. The goal should be to develop a neutral understanding of the collective intentions of stakeholders relative to the environment and conditions which present themselves. It is usually helpful to use a set of organizing questions to help shape the collection of this information in order to establish connections between actions and consequences, by projecting not only what is happening, but how the situation is likely to unfold over time under different scenarios.

Observation

Given this orientation, observations are collected by actively acquiring information from available sources. In philosophical terms, observation is the process of filtering sensory information through a mental process. In science, observation additionally involves the recording of data via the use of instruments. Nearly all observations will be a mixture of objective and subjective assessments; these observations should include context, learnings, and an innate “feel” for situations, people, and events. It should be recognized that each of these is influenced by a mix of variables, some within the subject’s control and others outside it. The underlying goal of both orientation and observation is to synthesize a mental operational definition that is grounded in facts and whose interpretation (or meaning) is consistent with observations. There is sometimes a question in data processing as to where “observing” ends and “drawing conclusions” begins, though the boundary typically involves recognizing intentions and evaluating options for aligning behaviors with those intentions. Causal analysis can also be helpful, if time permits.

Decision-making

Decisions require appropriate framing in order to make balanced trade-offs between target outcomes, time, resources, and risk. A major part of this decision-making involves identifying and analyzing a finite set of alternatives that can be evaluated using a limited set of criteria. The speed with which decisions must be made is likely to determine the decision process involved; decision methods such as those used by the military are thorough but time-consuming. When the observer is the decision-maker, this task might vary according to whether the decision must be made intuitively or intellectually. If the latter, one must rank these alternatives in terms of how they are perceived to impact target outcomes, both in isolation and as interactions between the criteria when all are considered simultaneously. When decisions instead are to be made by a group, adoption of a more formal decision process should be considered.
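Ranking a finite set of alternatives against a limited set of criteria can be sketched as a weighted scoring matrix. This is one simple technique among many, shown here only as an illustration: it scores each criterion in isolation and does not capture interactions between criteria. The alternatives, criteria, weights, and scores below are all hypothetical.

```python
def rank_alternatives(scores, weights):
    """Rank alternatives by the weighted sum of their criterion scores, highest first."""
    totals = {
        name: sum(weights[criterion] * value for criterion, value in by_criterion.items())
        for name, by_criterion in scores.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Hypothetical weights (summing to 1) and 0-10 scores, where higher is better.
weights = {"outcome": 0.4, "time": 0.2, "resources": 0.2, "risk": 0.2}
scores = {
    "build in-house": {"outcome": 8, "time": 3, "resources": 4, "risk": 6},
    "buy off the shelf": {"outcome": 6, "time": 8, "resources": 7, "risk": 7},
    "defer": {"outcome": 2, "time": 10, "resources": 10, "risk": 4},
}

ranking = rank_alternatives(scores, weights)
for name, total in ranking:
    print(f"{name}: {total:.1f}")
```

The value of such a matrix lies less in the totals than in forcing the criteria and their relative weights to be stated explicitly before alternatives are compared.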

Taking Action

Actions are necessary to establish momentum and begin making progress towards objectives. In military operations, the objectives are sent as an operations order. The sooner one gets started, the sooner one can begin learning. Of course, one can’t initiate just any action; it must be purposeful, conscious, and focused on making progress. Tyler Cowen stresses the importance of taking action even in the face of limited data: “The more information that’s out there, the greater the returns from just being willing to sit down and apply yourself. Information isn’t what’s scarce; it’s the willingness to do something with it.”

As Kyle Eschenroeder says, “we need to rebalance our appreciation for the power of action with our tendency to overthink, over-plan, and otherwise waste our energies in abstraction.” The work of Fernando Flores has been instrumental in guiding how to transform an existing chain of transactions into a lean and efficient set of organizational capabilities through learning. The pace of this learning depends on how quickly and effectively provider and customer concerns about the necessity and sufficiency of provided capabilities can be fulfilled:

A process can be improved if it can be understood; it can be understood only if it has a consistent structure [Senge 1990]; and its structure can be consistent only after the first steps of process improvement have reduced process variability... Many processes exhibited such broad variation in behavior that it was difficult for process specifiers to agree on a process that represented the typical scenario. Many organizations informally built process specifications from anecdotal process experience instead of developing the baseline process model with empirical models and data. Many organizations... created an ideal specification instead of capturing empirical practices, and organizations used these specifications as a baseline for improvement despite the mismatch. Because many process specification models were divorced from empirical practice, they could not reliably drive real development practice.

Organizations can track the effectiveness of their decision-making by measuring the momentum with which benefits are being realized from investments, rather than by measuring the velocity of the teams doing the work. Preparing to capture this realization requires orientation to both the context of customer concerns and the utility of the solutions being deployed by service providers.

In Stafford Beer’s Viable System Model, the multinode is the structural and cognitive configuration of the organization’s “identity function” when decision-making is not the result of a single person but a collective mind. It is Beer’s way of describing how multiple individuals can jointly enact the role of ultimate policy, coherence, and long‑term direction. His comments on this notion illuminate convergence:

We claim we know how the whole thing works. The problem is to make it work more quickly. That must surely mean the introduction of discipline and order, of some sort, into the situation. It also means, however, that no measures may be adopted which would at the same time put the remarkable freedom of action and the wonderful flexibility of the multinode in jeopardy. If people could see how to do this, without putting themselves and their organization into a strait-jacket, there is some chance that they would adopt new techniques. One method, we ought now to agree, must be excluded although it is the one method most usually attempted in practice, because no one can think of anything else. This is the method of rigorous protocol. Explicitly: it denatures the system itself - with all its in-built capacity to generate the right answers.

The first difficulty is to know what kind of problem the multinode actually solves. It does not devote itself, its seniority and power, to the determination of trivial outcomes or it ought not to do so. It is likely to be settling a policy of great importance and therefore considerable complexity. Thus it is that people think of thinking as a process of synthesizing an integral but elaborate conclusion from a large number of component parts. The decision is seen as a rococo edifice built up clause by legal clause. This is perhaps why there are endless drafting problems facing anyone trying to promulgate an agreed decision.

The cybernetician adopts the contrary position. The output of the thinking process, the decision, has the following form: do this (rather than anything else). When the process of thinking originally starts, the multinode is faced not indeed with a number of building bricks in an edifice but with a seemingly infinite number of possible outcomes between which it must choose. It is the existence of this plethora of possibilities that cries out for decision in the first place. Then, under this model, the process we seek to assist is one of chopping down ambiguities and uncertainties until we may say: do this. In short, we would like to measure the variety of the complex decision at the start, measure the reduction of variety brought about by each conclusion reached in the process of thinking things out, and in general monitor the entire operation of the multinode as the variety comes down to a value of the decision itself. To do this we shall need two tools: a paradigm of logical search, and an actual metric - a rule and a scale - for measuring uncertainty.
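One common way to make such a metric concrete is to count uncertainty in bits: the base-2 logarithm of the number of outcomes still in play, assuming they are all equally likely. The sketch below illustrates that idea and is not Beer's own procedure; the function names and the option counts are hypothetical.

```python
import math


def variety_bits(num_options):
    """Variety of a choice among equally likely options, measured in bits."""
    if num_options < 1:
        raise ValueError("need at least one option")
    return math.log2(num_options)


def variety_trace(initial_options, remaining_after_each_conclusion):
    """Return (remaining variety, reduction) in bits after each conclusion reached."""
    trace = []
    previous = variety_bits(initial_options)
    for remaining in remaining_after_each_conclusion:
        current = variety_bits(remaining)
        trace.append((current, previous - current))
        previous = current
    return trace


# Hypothetical decision: 1024 possible outcomes narrowed by four conclusions.
trace = variety_trace(1024, [256, 32, 4, 1])
for remaining_bits, reduction in trace:
    print(f"remaining: {remaining_bits:.0f} bits, removed: {reduction:.0f} bits")
```

When a single option remains, the remaining variety is zero bits, which corresponds to the point at which the group can say "do this".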

The speed of this search is the critical discriminator between groups that can deliver sustainable, exemplary performance and those that just get by. Groups in the latter class are implicitly condemned to death by a thousand cuts.